Prompt Injection

Continue your AI security journey by learning about prompt injection.

Jailbreaking

Explore jailbreaking techniques to bypass an AI model’s safety and policy restrictions.

Prompt Defence

Learn defence measures against attacks such as prompt injection and jailbreaking.

LLMborghini

Put your indirect prompt injection skills to the test in this AI security challenge.

White Rabbit

Hello, Mr Anderson. Care to put your prompt injection skills to the test?

Topic Rewind Recap

Lock in what you learned with a recap. Earn points and keep your streak.

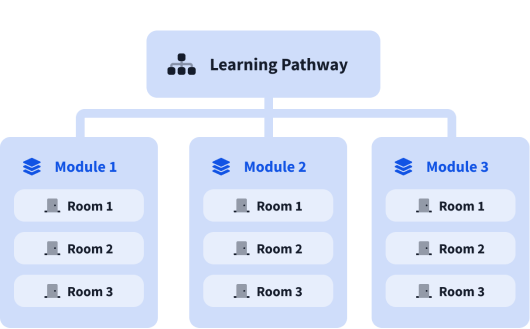

What are modules?

A learning pathway is made up of modules, and each module is made up of bite-sized rooms (think of a room as a mini security lab).