To access material, start machines and answer questions login.

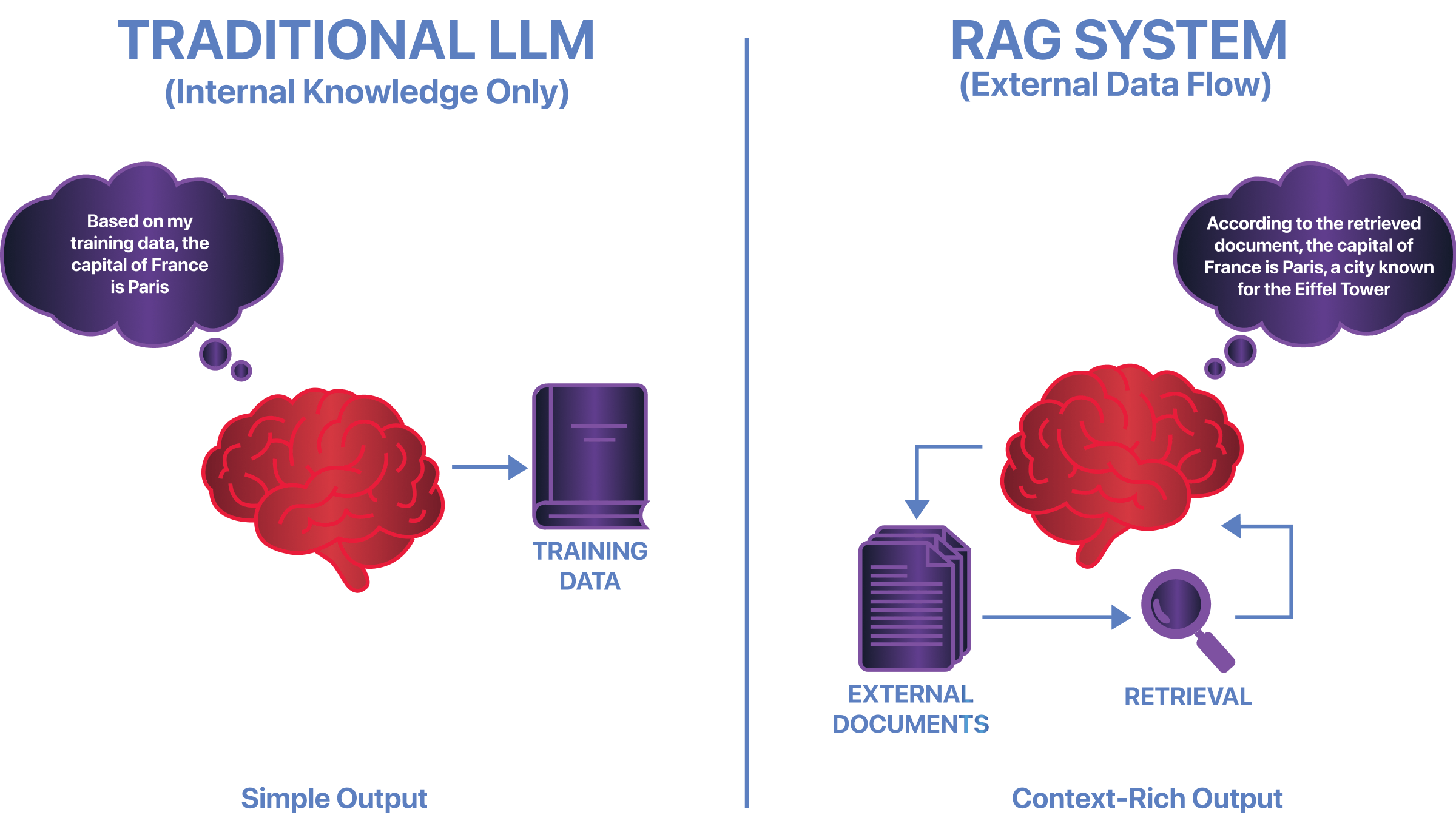

Retrieval-Augmented Generation () allows language models to use external documents when answering questions. Instead of relying solely on , a system retrieves relevant information at inference time and provides it as additional context before generating a response. This improves accuracy and freshness, but it also changes how trust works in the system. This room covers how systems work, where their unique security risks appear, and how attackers exploit retrieval, context injection, and trust boundaries to manipulate model outputs.

Learning Objectives

By completing this room, you will be able to:

- Describe how systems work at a high level

- Explain why retrieval introduces inference-time risks

- Identify concrete security issues specific to

- Understand why traditional security assumptions do not fully apply

Prerequisites

Before starting this room, you should be familiar with:

- What a Large Language Model () is

- How prompts and responses work in systems

- Basic security concepts such as trust boundaries and data

No prior experience is required.

I understand the learning objectives and am ready to learn about RAG security fundamentals!

Ready to learn Cyber Security?

The RAG Security Fundamentals room is only available for premium users. Signup now to access more than 500 free rooms and learn cyber security through a fun, interactive learning environment.

Already have an account? Log in