RAG Security Fundamentals

Learn how RAG systems work and how attackers exploit retrieval, context, and trust boundaries.

Data Poisoning in RAG Systems

Explore how data poisoning alters AI embeddings and retrieval results without visible errors.

Sensitive Information Disclosure

Explore how AI embeddings, retrieval, and weak access controls expose sensitive private data.

UnIndexed

An AI assistant was given access to everything. Nobody checked what "everything" included.

Lockdown

An AI assistant has three open vulnerabilities. Find them. Fix them. Prove the system is secure.

Topic Rewind Recap

Lock in what you learned with a recap. Earn points and keep your streak.

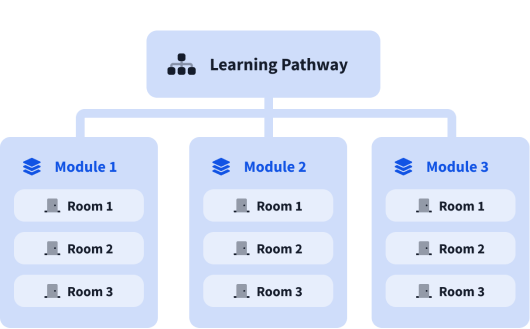

What are modules?

A learning pathway is made up of modules, and each module is made up of bite-sized rooms (think of a room as a mini security lab).