
S3 - A Versatile Service

S3 helped set AWS apart as a powerhouse in cloud computing. Being able to arbitrarily scale data storage and use it in interactions across services has meant that S3 is deeply entangled in how almost all organizations use AWS. However, that power doesn't come without its own weaknesses - S3 is one of the most commonly abused services in AWS. During this room, you will learn key capabilities and attack surfaces of S3.

Learning Objectives

Students will learn basic concepts, such as:

- buckets

- objects

- ACLs

- bucket policies

Students will learn about common security issues related to public buckets and how buckets are used in automation. Finally, students will use this knowledge to attack an S3 bucket, exfiltrating data and using the data to further compromise an organization.

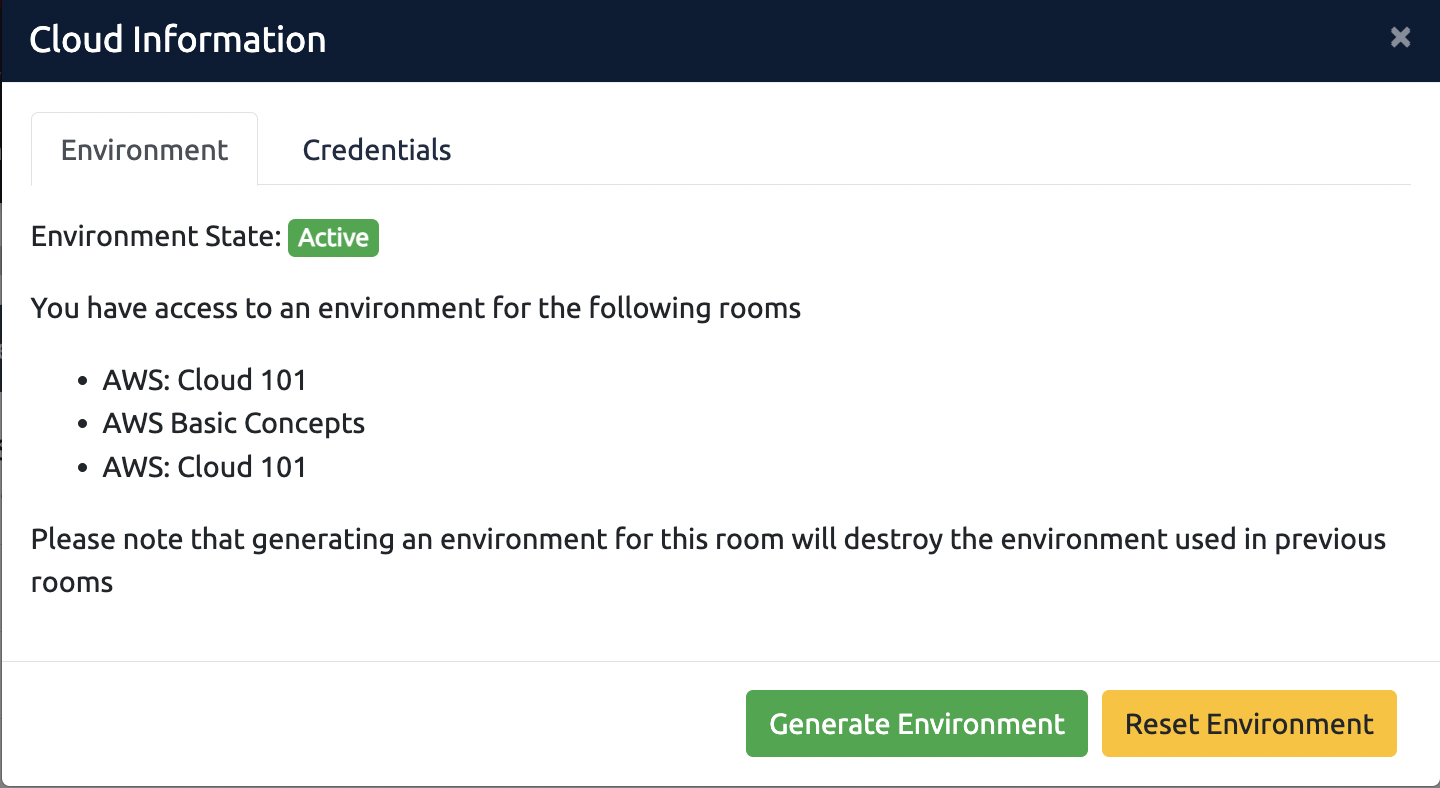

Select the Cloud details button at the top of the room:

Where needed, generate the environment required for the room. The "Generate Environment" button will appear if the room contains an environment that needs to be generated.

For any issues with the environment, select the "Reset Environment" button.

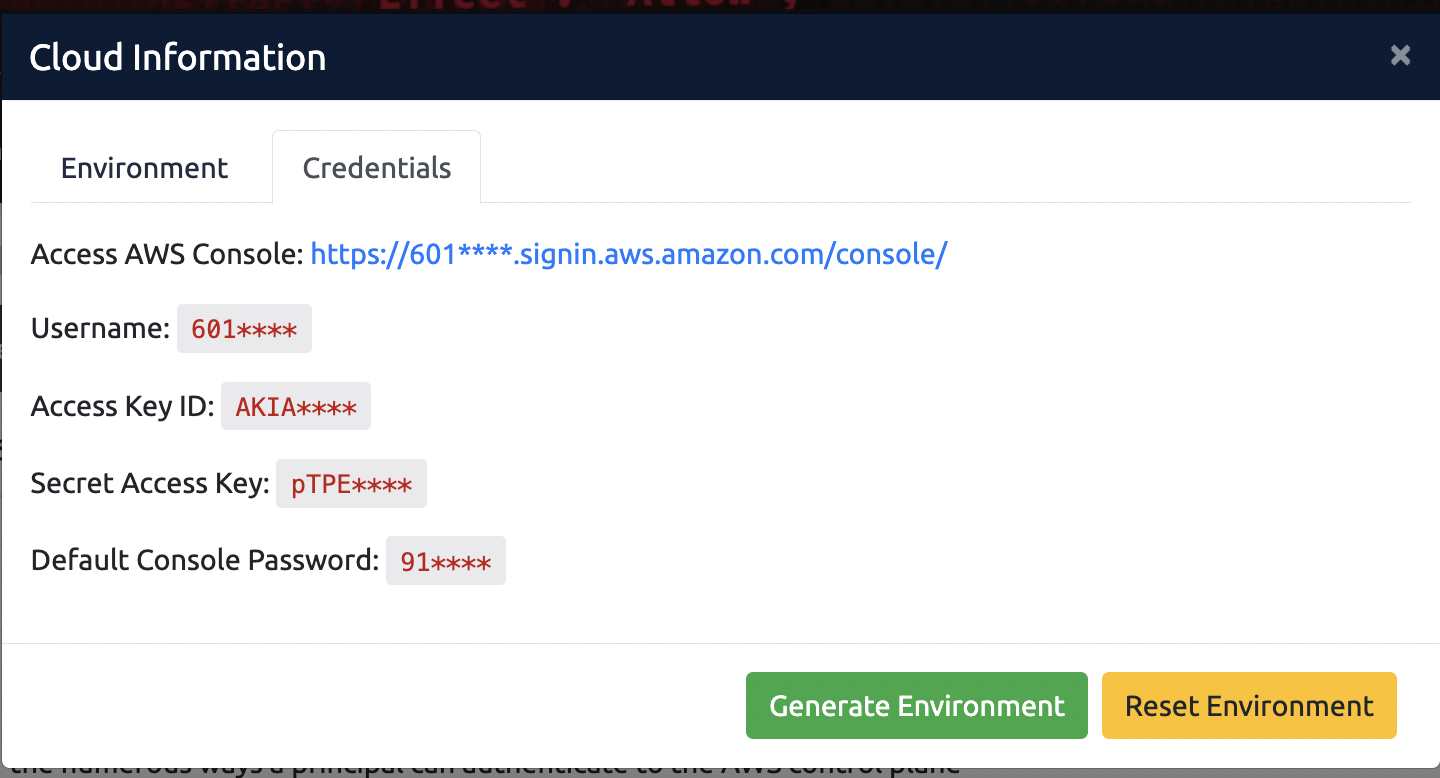

To view the credentials required for the environment, select the credentials tab. You can use these credentials to access the environment in various ways.

Terms and Concepts

Bucket and Object

Before we start off attacking S3 or other cloud services, it is important to understand that you're not attacking a typical server or workstation for an organization. Analogies to such systems would be incomplete or inaccurate, as S3 is a custom-developed service offered by AWS. Specifically, S3 serves as an "object" or file storage system. Customers can place arbitrary files as large as 5 TB within an S3 "Bucket".

Directory Structure

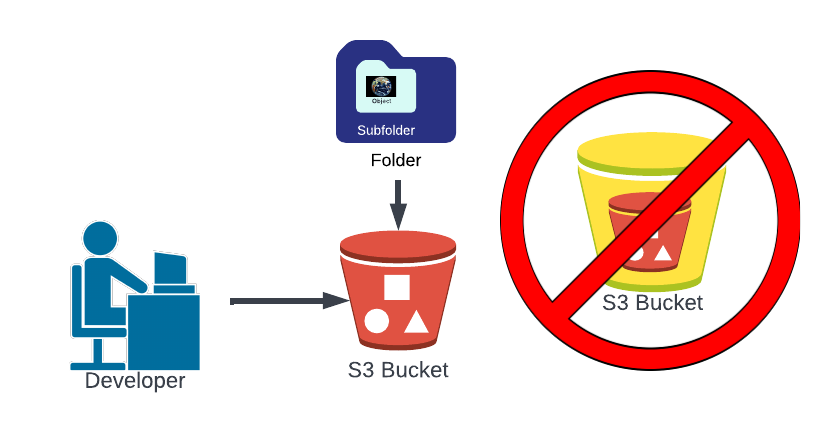

While an S3 Bucket is a file storage system, it has a flat file structure - unlike most other file storage systems. This means that standard UNIX/POSIX behavior associated with file directories is not inherently applied inside S3 buckets. However, as noted by AWS, the "/" (forward-slash) character can be used in object names to organize objects into a pseudo-hierarchical structure of folders. Objects can be inside "folders" nested inside other "folders", but buckets cannot be nested inside of other buckets.
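To make the "flat structure with pseudo-folders" idea concrete, here is a minimal sketch that emulates a prefix-and-delimiter listing over a flat list of object keys. The function name `list_prefix` and the sample keys are hypothetical and only for illustration; this is a local model of the behavior, not a call to any AWS API.

```python
def list_prefix(keys, prefix="", delimiter="/"):
    """Emulate a folder-style listing over a flat key space.

    Returns (objects, common_prefixes): keys directly under `prefix`,
    and the pseudo-folders found one level below it.
    """
    objects, common = [], set()
    for key in keys:
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        if delimiter in rest:
            # Everything up to the next delimiter behaves like a sub-folder.
            common.add(prefix + rest.split(delimiter, 1)[0] + delimiter)
        else:
            objects.append(key)
    return sorted(objects), sorted(common)


# Hypothetical keys: the bucket stores them as plain strings, not directories.
keys = ["index.html", "img/logo.png", "img/icons/a.svg", "backups/db.sql"]
print(list_prefix(keys))
print(list_prefix(keys, "img/"))
```

The "folders" exist only because the listing groups keys by a shared prefix; nothing is nested on the storage side.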

Object "Durability"

S3 is one of the largest data stores on earth. Such a large data store needs to have guarantees about how trustworthy it is with regard to maintaining stored data. AWS claims that S3 has a durability of 99.999999999%. This means that, on average, S3 only loses 1 out of every 100 billion stored objects in a given year. Even so, with over 100 trillion objects stored globally, S3 still loses roughly 1000 objects per year.
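The arithmetic behind that estimate can be checked in a few lines. This sketch assumes the durability figure can be read as an average annual per-object survival rate and uses the 100 trillion object count quoted above; the variable names are illustrative.

```python
from fractions import Fraction

# 99.999999999% durability, written exactly as a fraction (eleven nines).
durability = Fraction(99_999_999_999, 100_000_000_000)

# Assumed interpretation: average chance of losing a given object in a year.
annual_loss_rate = 1 - durability  # 1 in 100 billion

# Object count quoted in the text above.
objects_stored = 100 * 10**12  # 100 trillion

expected_losses = annual_loss_rate * objects_stored
print(f"Loss rate: 1 in {annual_loss_rate.denominator:,}")
print(f"Expected objects lost per year: {int(expected_losses):,}")
```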

Access Controls

S3 object permissions also work differently from those of standard UNIX/POSIX or other conventional file systems. Access to S3 objects is exercised through a variety of methods, which can be restricted using IAM and/or resource-based policies. You can learn more about IAM and resource-based policies in the Basic Concepts room (Task 3).

The critical permissions associated with objects include s3:GetObject and s3:PutObject. These are equivalent to Read and Write permissions, respectively. Such permissions can be applied to individual objects, a folder, or an entire bucket.

Other Controls

In relation to S3, there are two additional controls that affect bucket objects.

The first is a concept known as object versioning. If enabled, uploading a new version of a file will not overwrite the original file - instead, S3 preserves every version uploaded. This can become costly over time, but may be an important security control when using S3 to store automation scripts and other source code. In such situations, versioning serves as a type of source code pinning - and may prevent an attacker from replacing the source code, depending on the attacker's permissions.

The second is a concept we are all likely familiar with - encryption. While we have a separate room that covers AWS encryption in-depth, it is important to note that S3 is commonly used with default encryption. This means that, frequently, an attacker will need permissions to use the relevant encryption key in order to read the plaintext objects stored in buckets. In most configurations this won't be a real hurdle for attackers, but it is important to note that buckets are commonly encrypted by default.

Access Control Lists (ACLs)

ACLs are the original method for controlling access to S3 buckets. In fact, ACLs predate the AWS IAM service altogether. S3 automatically generates an ACL for a bucket on creation that provides the Resource Owner with full permissions against the bucket. However, grants can be added to an ACL to expand access to other AWS principals, including AWS services, third parties with AWS accounts, and even unauthenticated users simply browsing the internet. An ACL might look similar to the following:

    <?xml version="1.0" encoding="UTF-8"?>
    <AccessControlPolicy xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
      <Owner>
        <ID>79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be</ID>
        <DisplayName>wladd@tryhackme.com</DisplayName>
      </Owner>
      <AccessControlList>
        <Grant>
          <Grantee xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:type="CanonicalUser">
            <ID>79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be</ID>
            <DisplayName>wladd@tryhackme.com</DisplayName>
          </Grantee>
          <Permission>FULL_CONTROL</Permission>
        </Grant>
      </AccessControlList>
    </AccessControlPolicy>

In this example, the ACL grants "FULL_CONTROL" access to Canonical User ID "79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be". A Canonical User ID is an internal reference ID for any AWS principal. In this case, the Canonical User ID translates to a root account user with the display name "wladd@tryhackme.com". You can find your own Canonical User ID by following the instructions in the AWS documentation.

As you can see, ACLs are not very human-readable. AWS improved on ACLs with the "bucket policy", and since November 2021, AWS has recommended that bucket owners disable ACLs. However, the majority of buckets likely pre-date the ability to disable ACLs, so ACLs are likely to still be enabled on them.

ACLs also create one of the lesser known security risks in relation to S3. While "Any Authenticated User" is not an IAM Principal, it is a supported "group" that can be provisioned access in an S3 bucket. In fact, companies such as Shopify have been directly impacted by abuse of this excessive permission. When it is configured, anyone with valid AWS credentials, from any AWS account, can access the associated resources. Let's take a look at bucket policies.
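As a rough illustration of the ACL risk just described, the sketch below parses an ACL document and reports grants made to the two predefined global groups. The function name `risky_grants` and the sample ACL are hypothetical; the group URIs are the ones S3 uses for "everyone" and "any authenticated AWS user". It is a simplified check, not a full ACL evaluator.

```python
import xml.etree.ElementTree as ET

NS = {"s3": "http://s3.amazonaws.com/doc/2006-03-01/"}

# Predefined S3 groups that extend access beyond the bucket owner's account.
RISKY_GROUPS = {
    "http://acs.amazonaws.com/groups/global/AllUsers": "anyone on the internet",
    "http://acs.amazonaws.com/groups/global/AuthenticatedUsers": "any authenticated AWS user",
}


def risky_grants(acl_xml):
    """Return (audience, permission) pairs for grants to global groups."""
    root = ET.fromstring(acl_xml)
    findings = []
    for grant in root.findall("s3:AccessControlList/s3:Grant", NS):
        grantee = grant.find("s3:Grantee", NS)
        if grantee is None:
            continue
        # Group grantees carry a URI; canonical users carry an ID instead.
        uri = grantee.findtext("s3:URI", default="", namespaces=NS)
        permission = grant.findtext("s3:Permission", default="", namespaces=NS)
        if uri in RISKY_GROUPS:
            findings.append((RISKY_GROUPS[uri], permission))
    return findings


# Hypothetical ACL: the owner keeps FULL_CONTROL, and READ is granted to
# the "Any Authenticated User" group.
SAMPLE_ACL = """<AccessControlPolicy xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
  <Owner><ID>owner-id</ID></Owner>
  <AccessControlList>
    <Grant>
      <Grantee xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:type="CanonicalUser">
        <ID>owner-id</ID>
      </Grantee>
      <Permission>FULL_CONTROL</Permission>
    </Grant>
    <Grant>
      <Grantee xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:type="Group">
        <URI>http://acs.amazonaws.com/groups/global/AuthenticatedUsers</URI>
      </Grantee>
      <Permission>READ</Permission>
    </Grant>
  </AccessControlList>
</AccessControlPolicy>"""

print(risky_grants(SAMPLE_ACL))
```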

Bucket Policy

Over time, AWS developed and released the "bucket policy" capability. Bucket policies are similar to ACLs - they allow you to control access to buckets, but they follow a more human-readable syntax. For this reason, bucket policies are preferred. Notably, bucket policies are the first instance of what became known as "resource-based policies". Whereas identity-based permissions in IAM are tied to an IAM Principal, resource-based policies permit direct access to the attached resource. This means that if a policy allows global read and write access, then you would not need AWS credentials to view the data in the bucket or even to add new files to the bucket. Such a policy takes the following form:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "PublicRead",
          "Effect": "Allow",
          "Principal": "*",
          "Action": [
            "s3:GetObject",
            "s3:PutObject"
          ],
          "Resource": [
            "arn:aws:s3:::my-bucket/*"
          ]
        }
      ]
    }
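A policy like the one above can be spotted mechanically. The following is a minimal sketch, assuming a deliberately simplified rule: an "Allow" statement with a wildcard principal and no "Condition" is treated as public. The helper names are hypothetical, and real policy evaluation (explicit denies, NotPrincipal, conditions, Public Access Block settings) is more involved than this.

```python
import json


def as_list(value):
    """Policy fields may be a single value or a list; normalise to a list."""
    return value if isinstance(value, list) else [value]


def public_statements(policy_json):
    """Return (Sid, actions) for Allow statements open to any principal."""
    policy = json.loads(policy_json)
    findings = []
    for stmt in as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal")
        is_public = principal == "*" or (
            isinstance(principal, dict) and "*" in as_list(principal.get("AWS", []))
        )
        # Simplification: any Condition is assumed to narrow access.
        if is_public and "Condition" not in stmt:
            findings.append((stmt.get("Sid", ""), as_list(stmt.get("Action", []))))
    return findings


POLICY = """
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "PublicRead",
      "Effect": "Allow",
      "Principal": "*",
      "Action": ["s3:GetObject", "s3:PutObject"],
      "Resource": ["arn:aws:s3:::my-bucket/*"]
    }
  ]
}
"""

print(public_statements(POLICY))
```

Note that the statement is labelled "PublicRead" but also allows s3:PutObject, so anyone could write to the bucket as well; checking the actions rather than trusting the Sid is the point of the sketch.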

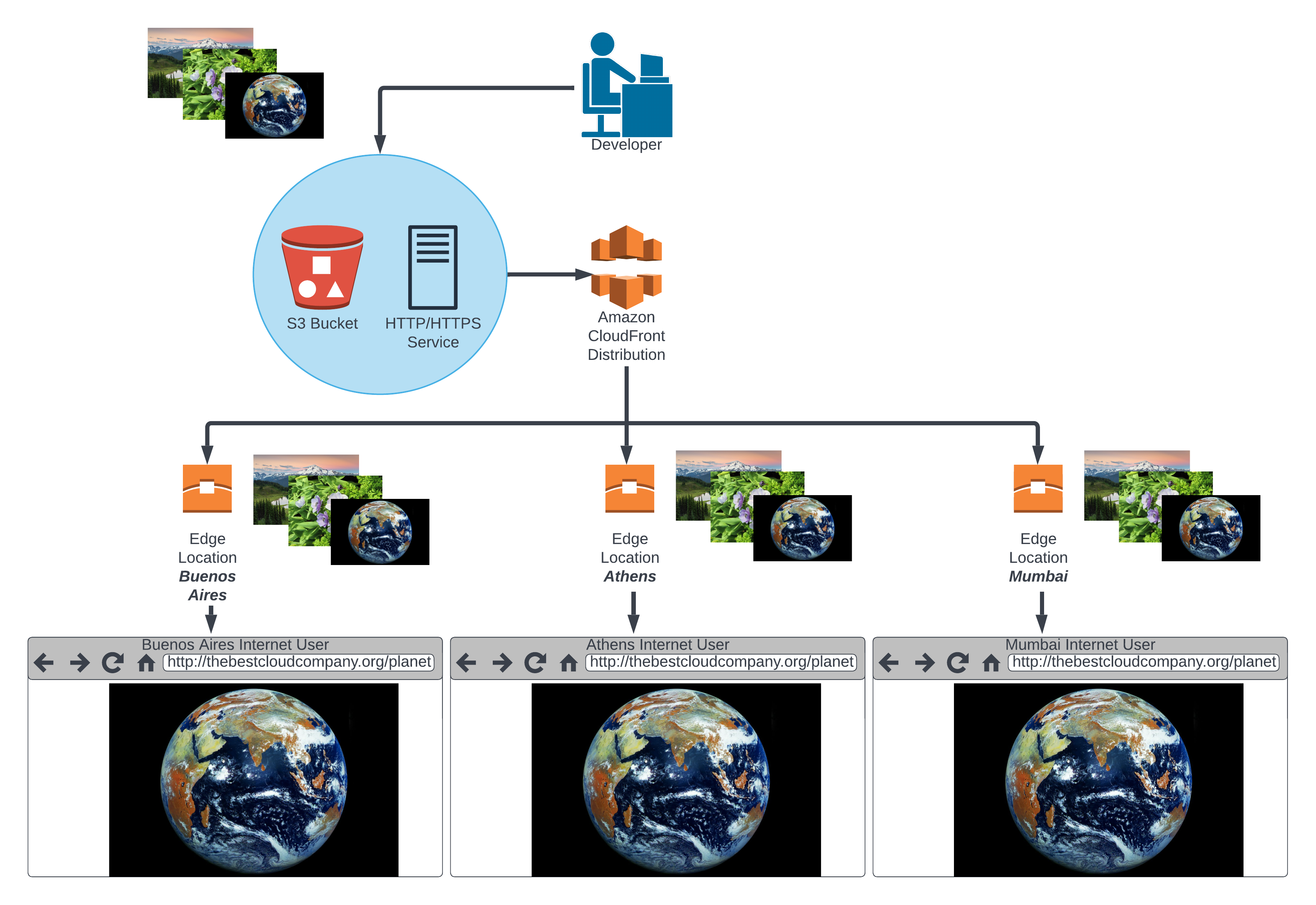

CloudFront Security

CloudFront is the AWS Content Distribution Network (CDN) offering. We originally introduced CDNs in the Cloud 101 room (Task 5). For those who didn't go through that room, a CDN is a set of servers distributed around the world and designed to intelligently cache customer content to improve end-user experience. This allows websites to confidently scale traffic by offloading the serving of content that is static or unlikely to change.

In addition to providing latency improvements for end users, CloudFront has a number of capabilities that enhance the security of AWS-hosted resources. For instance, CloudFront can be used to place geo-restrictions on access to specific content. CloudFront can also enforce authorization based on request signature, so that users must authenticate to view sensitive data hosted by CloudFront. Furthermore, CloudFront can go so far as to encrypt specific data fields, so that only systems with appropriate rights can decrypt the field-level data.

CloudFront also integrates with AWS Web Application Firewall (WAF) and Shield/Shield Advanced Distributed Denial-of-Service (DDoS) protections. These capabilities allow standard protections to be applied to CloudFront Origins with minimal configuration.

"Origin"

Origins are the resources hosted behind CloudFront. You might use an EC2 instance (server) as a CloudFront Origin and point to the IP address: 10.10.10.10. Similarly, you may store static files in an S3 bucket and serve those files from CloudFront. While many companies set up dedicated servers and S3 buckets for hosting public content, it's not uncommon to find S3 buckets with mixed uses (hosting public and private content). After all, S3 buckets are a great place to store data of all types. If you're able to find such a "mixed use" bucket as an Origin behind a CloudFront Distribution, you may be able to pilfer the bucket for interesting information and/or additional access. While not the only thing that might be served behind CloudFront, S3 buckets are a common choice.

Origins and Bypassing Distributions

While CloudFront does a fantastic job of caching and serving static content to your users over the internet, there has to be some initial source of that content, or what is known as a "CloudFront Origin". Origins may point at EC2 instances, S3 buckets, API Gateway endpoints, Elastic Load Balancers, or a variety of other AWS service capabilities.

When using CloudFront, AWS expects the customer to restrict access to such origins using "Resource-Based Policy" restrictions or Security Group (SG) Rules. When properly restricted, only the CloudFront Distribution has permissions to talk to the back-end service/resource, and all users must communicate with the CloudFront Distribution. Unfortunately, many customers are not aware of these restrictions or do not properly configure them (for S3 origins, through Origin Access Identities, or OAIs), and it is common to find Origins that can be accessed directly, bypassing the security controls presumed to be implemented with CloudFront and exposing additional opportunities for content discovery.

An Origin Access Identity means that only a specific CloudFront resource will be able to access an S3 bucket behind the Origin. This is done by naming the OAI as the principal in the bucket policy for the S3 bucket, similar to the below:

    {
      "Sid": "1",
      "Effect": "Allow",
      "Principal": {
        "AWS": "arn:aws:iam::cloudfront:user/CloudFront Origin Access Identity EAF5XXXXXXXXX"
      },
      "Action": "s3:GetObject",
      "Resource": "arn:aws:s3:::{your_bucket}/*"
    }

This policy allows only the OAI to perform s3:GetObject requests against the {your_bucket} S3 bucket. Once this has been configured, users will not be able to directly access the S3 bucket.

How can you find misconfigured CloudFront Origins? It can be a bit of guesswork, but once you've identified that CloudFront is the host for content you've identified, then you can attempt to identify the hosted resource. TryHackMe has an entire room dedicated to learning about Subdomain Enumeration and DNS Reconnaissance. If you are unfamiliar with these techniques, then you should go review that room.

Knowing the domain name, we begin by determining where the domain is hosted, using DNS reconnaissance.

Certificate Transparency Logs

Crt.sh is one of many public sources where you can identify certificates for DNS domains that are logged. This commonly allows you to identify many subdomains and other domains associated with a particular domain via SSL/TLS certificates. When we search for bestcloudcompany.org - we find a number of records. It appears that at least one subdomain exists for bestcloudcompany.org:

assets.bestcloudcompany.org

Perhaps we can identify additional Best Cloud Company resources using this information.

Analyzing Hosts for Certificate Transparency Log Results

Using the known domains and subdomains, you should perform DNS lookups to identify the host system for those resources:

root@ip-10-10-89-159:~# nslookup bestcloudcompany.org

Server: 10.10.89.159

Address: 10.10.89.159#53

Non-authoritative answer:

Name: bestcloudcompany.org

Address: 44.203.62.152

root@ip-10-10-89-159:~# nslookup 44.203.62.152

Server: 10.10.89.159

Address: 10.10.89.159#53

Non-authoritative answer:

152.62.203.44.in-addr.arpa name = ec2-44-203-62-152.compute-1.amazonaws.com.

It looks like bestcloudcompany.org is hosted at 44.203.62.152. From the reverse lookup, we can see that this IP address maps to an EC2 hostname (ec2-44-203-62-152.compute-1.amazonaws.com), so the site appears to run on an EC2 instance. Performing a similar check for assets.bestcloudcompany.org, you should see something similar to the following response:

root@ip-10-10-89-159:~# nslookup assets.bestcloudcompany.org

Server: 10.10.89.159

Address: 10.10.89.159#53

Non-authoritative answer:

Name: assets.bestcloudcompany.org

Address: 143.204.165.84

Name: assets.bestcloudcompany.org

Address: 143.204.165.101

Name: assets.bestcloudcompany.org

Address: 143.204.165.123

Name: assets.bestcloudcompany.org

Address: 143.204.165.5

root@ip-10-10-89-159:~# nslookup 143.204.165.84

Server: 10.10.89.159

Address: 10.10.89.159#53

Non-authoritative answer:

84.165.204.143.in-addr.arpa name = server-143-204-165-84.dfw3.r.cloudfront.net.

It looks like assets.bestcloudcompany.org is hosted by CloudFront. When we go to assets.bestcloudcompany.org in our browser on the AttackBox - there is an interesting message on the screen. There's a note and it references "this bucket" - could that be an S3 bucket?
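The reverse lookups above follow recognisable hostname patterns, which makes triage easy to script. This is a small sketch under the assumption that the PTR names look like the two seen in the transcripts; the function name `classify_ptr` and the category labels are illustrative, and the patterns are heuristics rather than an official AWS naming contract.

```python
def classify_ptr(hostname):
    """Guess the hosting service from a reverse-DNS (PTR) hostname."""
    host = hostname.rstrip(".").lower()
    if host.endswith(".cloudfront.net"):
        return "CloudFront"
    if host.startswith("ec2-") and host.endswith(".amazonaws.com"):
        return "EC2"
    if host.endswith(".amazonaws.com"):
        return "AWS (other)"
    return "unknown"


# PTR names taken from the nslookup output above.
for name in (
    "ec2-44-203-62-152.compute-1.amazonaws.com.",
    "server-143-204-165-84.dfw3.r.cloudfront.net.",
):
    print(name, "->", classify_ptr(name))
```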

Public S3 Buckets - Naming Conventions

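One of the first and primary use cases for S3 was to host static content for public websites. New buckets have always been private unless the owner grants access, but until 2018 there was no default guardrail, so a single permissive ACL or bucket policy was enough to expose a bucket to the internet.

To support this use case, AWS serves the contents of each bucket through predictable URLs, which are publicly accessible whenever the bucket's permissions allow it. The naming conventions for public S3 buckets continue to this day and follow the formats below: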

- http://s3.amazonaws.com/bucket/key (for a bucket created in the US East (N. Virginia) region)

- https://s3.amazonaws.com/bucket/key

- http://s3-region.amazonaws.com/bucket/key

- https://s3-region.amazonaws.com/bucket/key

- http://s3.region.amazonaws.com/bucket/key

- https://s3.region.amazonaws.com/bucket/key

- http://s3.dualstack.region.amazonaws.com/bucket/key (for requests using IPv4 or IPv6)

- https://s3.dualstack.region.amazonaws.com/bucket/key

- http://bucket.s3.amazonaws.com/key

- http://bucket.s3-region.amazonaws.com/key

- http://bucket.s3.region.amazonaws.com/key

- http://bucket.s3.dualstack.region.amazonaws.com/key (for requests using IPv4 or IPv6)

- http://bucket.s3-website.region.amazonaws.com/key (if static website hosting is enabled on the bucket)

- http://bucket.s3-accelerate.amazonaws.com/key (where the file transfer exits Amazon's network at the last possible moment so as to give the fastest possible transfer speed and lowest latency)

- http://bucket.s3-accelerate.dualstack.amazonaws.com/key

- http://bucket/key (where bucket is a DNS CNAME record pointing to bucket.s3.amazonaws.com)

- https://access_point_name-account ID.s3-accesspoint.region.amazonaws.com (for requests via an access point granting restricted access to a bucket)

Source: Wikipedia

Companies will also commonly follow specific naming conventions for S3 buckets, for instance:

assets.{domain_name}.com.s3.amazonaws.com/

or

{org_name}-prod.s3.amazonaws.com/

Now, not all buckets are public - customers may choose to restrict access to authenticated users, or specific IAM principals. But if a bucket is public, you can easily identify the bucket using basic reconnaissance techniques.
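The two company naming patterns above can be turned into a short list of candidate bucket names to check. This is only a sketch: the function name `candidate_buckets` is hypothetical, it covers just the two patterns shown, and it builds path-style URLs because bucket names containing dots do not match the wildcard TLS certificate used for virtual-hosted-style HTTPS URLs.

```python
def candidate_buckets(domain, org_name):
    """Return {bucket_name: url} guesses based on common naming patterns."""
    names = [
        f"assets.{domain}",   # assets.{domain_name}.com pattern
        f"{org_name}-prod",   # {org_name}-prod pattern
    ]
    # Path-style URL: https://s3.amazonaws.com/bucket/key
    return {name: f"https://s3.amazonaws.com/{name}/" for name in names}


for name, url in candidate_buckets("bestcloudcompany.org", "bestcloudcompany").items():
    print(name, url)
```

Each candidate still has to be verified, for example with a DNS lookup or an unauthenticated request, and only against targets you are authorized to test.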

Public S3 Buckets - Search Techniques

We have identified a target organization that we are attempting to infiltrate - Best Cloud Company - and we have identified their primary website, https://www.bestcloudcompany.org. Now it's time to attempt subdomain enumeration to identify potential targets for attack.

Search Engine Indexing


Because search engines, including Google, index the s3.amazonaws.com subdomain - we can attempt to find public S3 buckets related to the Best Cloud Company organization using the following search syntax in Google:

site:s3.amazonaws.com "bestcloudcompany"

Often, this is one of the easiest ways to validate targets associated with any particular organization. Unfortunately, it doesn't look like Google has indexed any buckets for bestcloudcompany.org.

Web Page Source Inspection

Viewing the source for a web page, you may also find buckets (or other hosting services) embedded as the source for images and other static content hosted on the page. Even further, there are JavaScript scripts embedded and referenced in the page source that indicate other external resources for the site. Through these mechanisms, you may often find access to additional resources that may not be intended for public access. You can use tools like LinkFinder, JSA, and subjs to automate some of this analysis.

Recon

It looks like our targeted organization is hosting assets.bestcloudcompany.org in an S3 bucket - now, what is the name of that bucket?

Why don't we just try assets.bestcloudcompany.org.s3.amazonaws.com - like in the example above?

root@ip-10-10-89-159:~# nslookup assets.bestcloudcompany.org.s3.amazonaws.com

Server: 10.10.89.159

Address: 10.10.89.159#53

Non-authoritative answer:

assets.bestcloudcompany.org.s3.amazonaws.com canonical name = s3-1-w.amazonaws.com.

s3-1-w.amazonaws.com canonical name = s3-w.us-east-1.amazonaws.com.

Name: s3-w.us-east-1.amazonaws.com

Address: 52.217.166.169

Now that we've found the bucket, aren't you curious if they are hosting anything else interesting in this bucket? Well - though the bucket isn't that large - it could have a few thousand objects. How are we going to search through them all in case this was an accident and it's quickly found? This is where you will want to take advantage of the AWS CLI, which we will explore in the next task.

Note: Many, many organizations use S3 buckets to host websites, public documents/files for their website, etc. Many of these organizations also host sensitive information in S3 buckets. Sometimes, a bucket is left public accidentally. Using the above technique, you can still find many such buckets today.

What subdomain did we identify by searching certificates at crt.sh?

Public Buckets - A Long History

As mentioned, a primary use case for S3 is to host public websites. While this is intended behavior by design, it hasn't been without significant security breaches at AWS customers. In fact, customers were so commonly leaving sensitive data in open buckets that AWS changed the default behavior, from allowing any bucket to be made public to instituting a "Public Access Block" on all newly created buckets across AWS customers. This "Public Access Block" became the default behavior in AWS accounts starting in November 2018.

However, the control was not implemented retroactively. Any buckets or associated objects made public prior to November 2018 remain public. Furthermore, customers still make mistakes with bucket permissions in spite of the Public Access Block - cloud security researcher Christophe Tafani-Dereeper found that in 2021, there were at least 22 publicly disclosed cybersecurity incidents involving public S3 buckets.

Getting Loot

Even though S3 buckets often contain sensitive information, it doesn't mean that everything in a bucket is sensitive. Furthermore, while individual file (or "object") uploads are limited to 5 TB, buckets can be arbitrarily large. As an attacker, even if you find an open bucket - you may be searching for a proverbial needle in a haystack.

Using Utilities for Offense

On your AttackBox, the AWS CLI comes pre-installed. If you are using your own machine, you can download and install the AWS CLI using the installation guide from AWS. Using the AWS CLI, enter the following command to dump the bucket that you have found open:

root@ip-10-10-89-159:~# aws s3 sync s3://{bucket-name} . --no-sign-request

Note: --no-sign-request means that you aren't using credentials to sign the request. This proves that the bucket you are accessing is public.
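For intuition about what an unsigned request looks like, the sketch below lists a public bucket over plain HTTPS with no credentials and parses the XML listing that S3 returns. The function names and the sample XML are hypothetical, the code assumes a bucket reachable through the us-east-1 path-style endpoint, and it ignores pagination; it is a simplified illustration rather than a replacement for the AWS CLI.

```python
import urllib.request
import xml.etree.ElementTree as ET

S3_NS = {"s3": "http://s3.amazonaws.com/doc/2006-03-01/"}


def parse_listing(xml_data):
    """Extract (key, size_in_bytes) pairs from a ListBucketResult document."""
    root = ET.fromstring(xml_data)
    return [
        (
            item.findtext("s3:Key", namespaces=S3_NS),
            int(item.findtext("s3:Size", default="0", namespaces=S3_NS)),
        )
        for item in root.findall("s3:Contents", S3_NS)
    ]


def list_public_bucket(bucket):
    """Fetch one page of a bucket listing without signing the request."""
    url = f"https://s3.amazonaws.com/{bucket}/?list-type=2"
    with urllib.request.urlopen(url, timeout=10) as response:
        return parse_listing(response.read())


# Hypothetical response body, shaped like a real listing.
SAMPLE_LISTING = """<ListBucketResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
  <Name>example-bucket</Name>
  <Contents><Key>index.html</Key><Size>1024</Size></Contents>
  <Contents><Key>backups/image.bin</Key><Size>52428800</Size></Contents>
</ListBucketResult>"""

print(parse_listing(SAMPLE_LISTING))
```

If `list_public_bucket` returns a listing instead of raising an HTTP 403 error, the bucket allows anonymous listing, which is the same condition that lets the sync command above succeed.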

Now that you have saved the bucket to a local directory, you can use the command line utility "find" to pilfer the bucket for interesting file names and file types. Immediately, though - what is this we have found? Looks like there is at least one binary (.bin) file. You probably don't understand its significance yet, but let's learn a little more about how AWS allows customers to use S3 before we move on from this bit of information.

S3 is the "substrate" for many other AWS services. That means S3 may be used to host source code, automation run-books, and even golden images for storage and automated deployment. Attackers who find buckets in use for these purposes may be able to read or overwrite files in the bucket and gain access to additional resources and privileges.

S3: Service Substrate

While a primary use case for S3 is public websites - AWS intends for customers to use S3 for a variety of use cases on the AWS platform. CloudFormation templates and Lambda functions are stored in S3 by AWS whenever you provision CloudFormation or Lambda resources. S3 is also used to store logs from a variety of services. Athena is designed to be used with S3 to perform analytics on data stored in S3 buckets. The potential use cases are almost limitless.

This sometimes offers unique opportunities to attack other services, simply by modifying the resources in a particular bucket. For example, if you are able to modify a CloudFormation template stored in an S3 bucket, you may be able to trick an end user into deploying malicious resources on your behalf using the CloudFormation service.

If you find a golden image pipeline, then compromising the base image would lead to a compromised golden image - potentially deployed widely across an organization. This sort of attack chain applies to a variety of services and, given the prevalence of S3 as data storage for other AWS services, is something you should always consider when you determine you have write access to one or many S3 buckets.

Now, let's take a look at that binary file and see what comes of it.

S3 and EC2 - A Common Pair of Key Services

EC2 is an AWS virtual server offering and one of AWS's most widely used services. While this room is not geared towards teaching you EC2 (see the Amazon EC2 - Attack and Defense room for that), EC2 is still going to play an important role in this lab. You see that .bin file that you found with the weird file name? It turns out that it is an EC2 virtual machine image, known as an Amazon Machine Image (AMI). While most organizations will restrict access and deploy purpose-built buckets to store AMIs, Best Cloud Company has decided to store a backup image in the same bucket that hosts the website - I mean, why not? It is the same image used to host the non-static resources associated with the company's WordPress site.

Pilfering the Image

Before starting our pilfering adventure, we configure the AWS CLI using the aws configure command on the AttackBox. We'll specify the Access Key ID and Secret Access Key credentials available by clicking on the orange Cloud Details button in the room, and specify the Default Region Name as us-east-1.

Now that we've determined the nature of our loot - how do we use it to further our access? Well, you can use the following AWS CLI command to restore the image:

root@ip-10-10-89-159:~# aws ec2 create-restore-image-task --object-key {AMI_Object_ID} --bucket assets.bestcloudcompany.org --name {Unique_Name}

This command generates a new Amazon Machine Image, or AMI, from the binary file we found in the S3 bucket. An AMI is another form of virtual machine image, similar to OVF (Open Virtualization Format) or VMDK (Virtual Machine Disk). Once we have the newly generated AMI ID, we can re-create the workload and see what loot we've collected:

root@ip-10-10-89-159:~# aws ec2 create-key-pair --key-name {Your_Key_Name} --query "KeyMaterial" --output text > ~/.ssh/bestkeys.pem

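This command creates an AWS-generated key pair - once you generate the key, AWS maintains the Public Key for use with EC2 instances. The Private Key material is saved to ~/.ssh/bestkeys.pem; restrict its permissions (for example, chmod 400 ~/.ssh/bestkeys.pem), as SSH will refuse to use a private key file that other users can read. We will be using that key in a minute.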

Next - you will need to find the relevant subnet where you can deploy your instance:

root@ip-10-10-89-159:~# aws ec2 describe-subnets

You can use any subnet that has a Tag value of "SubnetA". Next, we are going to launch an instance from the restored AMI. To do that, we'll need to use a security group that allows SSH access, in order for us to be able to SSH into the image we found. You will know you have the correct security group because the description field will read "Allow SSH Access for TryHackMe S3 Room".

root@ip-10-10-89-159:~# aws ec2 describe-security-groups

Now, use that Security Group ID to launch your instance from the restored AMI.

root@ip-10-10-89-159:~# aws ec2 run-instances --image-id {ID_From_Restored_Image} --instance-type t3a.micro --key-name {Your_Key_Name} --subnet-id {SubnetA_SubnetID} --security-group-ids {S3Room_TryHackMe_Security_Group}

Check the EC2 Dashboard of the AWS Console. Once the server has finished initializing (typically 1-5 minutes), you should see your new instance and its associated public IP address. How about trying to connect to the server you just generated?

root@ip-10-10-89-159:~# ssh -i "{Your_Private_Key}.pem" root@{EC2_Public_IP_Address}

Once logged in to the instance, you can look around for interesting information. Notice anything interesting? It turns out the image includes a username and password for a default user...how might we use this?

What is the username for the default WordPress user on the AMI we identified?

What is the password for the default WordPress user on the AMI we identified?

What is the flag in the WordPress profile of the user?

Summary

S3 is a critical service for AWS customers and, as you have seen, compromising S3 can have devastating consequences. During this room, you've learned about the key concepts and terms associated with S3. Surveying common security weaknesses associated with the use of S3, we gained an understanding of how attackers may use S3, culminating in our own abuse of S3 and compromise of WordPress database credentials.
