Secure AI Systems

Understand how AI systems are architected and how to assess their security posture.

This module explores AI systems as an attack surface, covering secure architectural design principles and how to identify weaknesses at the system integration layer. Learners examine LLM-specific security concerns, apply threat modelling resources such as STRIDE and OWASP in AI contexts, and practise attack surface discovery when AI components are present. The module concludes with a static site exercise where learners put their AI threat modelling skills to the test.

Securing AI Systems

Map AI architecture, identify OWASP/ATLAS attack surfaces, and apply secure design to trust boundaries.

LLM Security

Understand LLMs as an attack surface and get an overview of LLM security threats.

AI Threat Modelling

Assess and mitigate enterprise AI/ML risks via systematic, defender-focused auditing.

AI System Reconnaissance

Discover AI infrastructure by scanning ML services and frameworks and by extracting metadata from APIs.

AI Threat Modelling Assessment

Put your AI threat modelling skills to the test using an interactive assessment application.

Topic Rewind Recap

Lock in what you learned with a recap. Earn points and keep your streak.

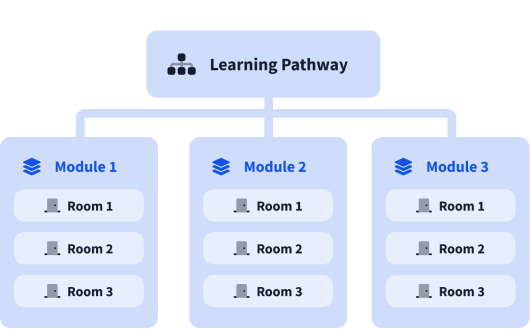

What are modules?

A learning pathway is made up of modules, and each module is made up of bite-sized rooms (think of a room as a mini security lab).